Read about NeutronTriage as a game changer in diagnosis: https://blog.neutrontech.ai/2026/04/11/the-day-the-cloud-died-why-neutrontriage-is-the-offline-first-on-device-revolution-for-global-health/

For over a decade, the Cloud has been the default foundation of modern computing. It promised scalability, flexibility, and global access. And for many applications, it delivered. The assumption has been that the Cloud is here to stay, so it has been presented as the standard, whether Public, Private, Hybrid, or Community. Here are the facts so far:

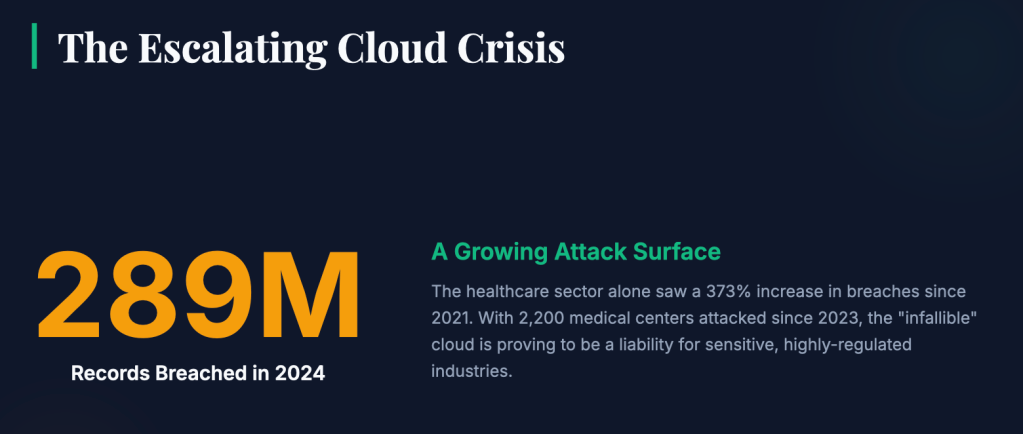

289 million individual healthcare records were breached in 2024, a 373% increase over 2021. The Change Healthcare breach that year, the largest ever reported by a single US healthcare organization, affected around 192.7 million people. 2025 was not spared either: although the size of individual breaches dropped, approximately 57–63 million individuals were affected. Nationally, 2,200 medical centers have faced cyberattacks since 2023. The 7-hour AWS outage in October 2025 disrupted healthcare systems, affecting EHRs, telemedicine, and billing platforms and resulting in losses of $62,500 per hour. The Cloud is one vast attack surface. And these figures cover only healthcare, where organizations are obliged to report breaches to the authorities; consider the financial sector, the military, and other highly regulated, compliance-driven industries.

The Artificial Intelligence Era

Recent AI systems are not immune to these attacks. As they move from experimental tools to mission-critical infrastructure, the assumptions that made the cloud dominant are beginning to break. Latency becomes a liability. Connectivity becomes a risk. And data sovereignty becomes non-negotiable. A new paradigm is emerging – one where intelligence is no longer centralized, but distributed. Not dependent, but autonomous. This is where the future of AI begins.

The Problem with “Bolt-On AI”

Most AI systems today were not designed from first principles. Instead, they were added—layered onto existing software stacks through APIs and cloud services. This “bolt-on” approach has three fundamental flaws:

1. Latency at the Wrong Time

When intelligence depends on remote servers, every decision requires a round trip. In real-world environments—hospitals, industrial systems, field operations—milliseconds matter. Delays aren’t just inconvenient; they can be critical.

2. Fragile Connectivity Assumptions

Cloud-based AI assumes constant internet access. But in many environments, connectivity is unreliable, intermittent, or intentionally restricted. When the connection drops, so does the intelligence.

3. Loss of Data Control

Sending sensitive data to third-party systems introduces regulatory, security, and operational risks. As global data laws tighten, organizations are being forced to rethink where – and how – their data is processed.

The result is a growing mismatch: AI is becoming more important, but the infrastructure supporting it is increasingly misaligned with real-world needs.

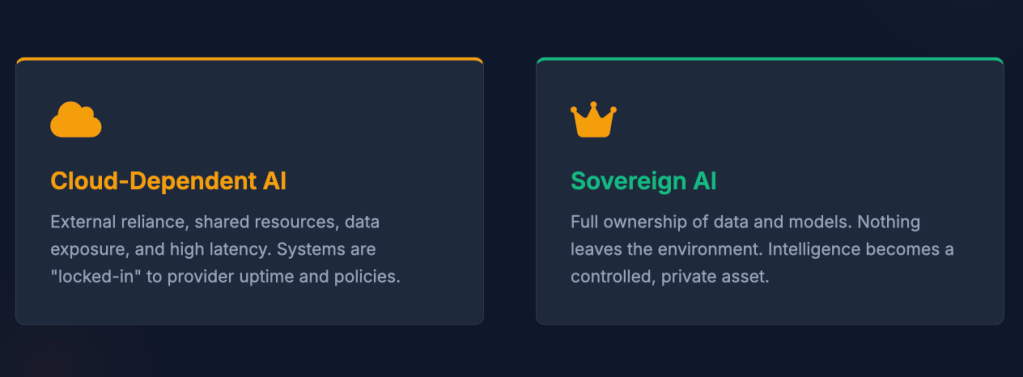

From Cloud AI to Sovereign AI

The next evolution of artificial intelligence is not just about better models – it’s about better architecture.

Sovereign AI represents a shift in control. Instead of relying on external providers, organizations retain full ownership of their data, models, and insights. Nothing leaves their environment unless they choose to release it.

This isn’t just a technical improvement—it’s a strategic one.

- Healthcare providers can process patient data without external exposure

- Defense organizations can operate in fully disconnected environments

- Enterprises can meet regulatory requirements without compromise

Sovereign AI transforms intelligence from a shared resource into a controlled asset.

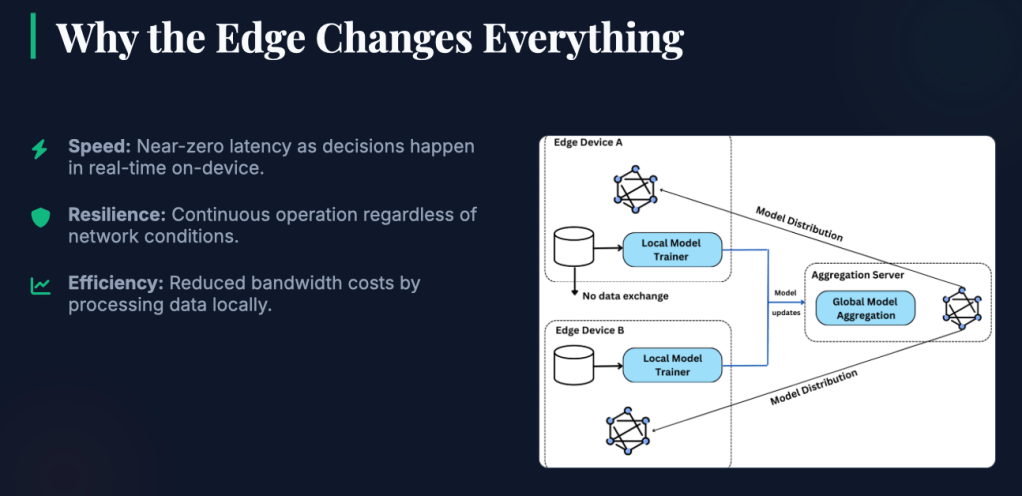

If the cloud centralized computing, the edge decentralizes it.

Edge-native AI moves processing closer to where data is generated—on devices, within facilities, and inside operational environments. This shift unlocks three critical advantages:

Speed

By eliminating the need to send data to distant servers, edge systems remove the network round trip from the decision path. Decisions happen in real time, not seconds later.

Resilience

Edge systems don’t depend on continuous connectivity. They continue operating regardless of network conditions.

Efficiency

Processing data locally reduces bandwidth costs and minimizes unnecessary data transfer. This is not just an optimization. It’s a redefinition of how intelligence operates.

Offline-First: Designing for Reality, Not Assumptions

One of the most overlooked truths in modern computing is this: connectivity is not guaranteed. In controlled demos and urban environments, “always connected” systems work fine. But step into a rural clinic, a secure facility, or a remote field site, and that assumption quickly breaks down.

Offline-first AI flips the model.

Instead of treating disconnection as an edge case, it treats it as the baseline.

- Systems are fully functional without internet access

- Data is processed locally, in real time

- Connectivity, when available, becomes an enhancement—not a requirement

This approach ensures continuity. Workflows don’t stop. Decisions don’t wait. Intelligence doesn’t disappear.
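The three points above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any particular product: `OfflineFirstStore`, `local_decision`, the temperature threshold, and the `upload` callback are all hypothetical names introduced here. Decisions are made and stored locally, and an outbox is flushed only when a link happens to be available.

```python
import json
import sqlite3
from typing import Callable


class OfflineFirstStore:
    """Hypothetical local store: results are saved on-device, then synced opportunistically."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS outbox ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "payload TEXT NOT NULL, "
            "synced INTEGER NOT NULL DEFAULT 0)"
        )

    def record(self, result: dict) -> int:
        # Persist locally first; no network is involved at this step.
        cur = self.db.execute(
            "INSERT INTO outbox (payload) VALUES (?)", (json.dumps(result),)
        )
        self.db.commit()
        return cur.lastrowid

    def pending(self) -> int:
        return self.db.execute(
            "SELECT COUNT(*) FROM outbox WHERE synced = 0"
        ).fetchone()[0]

    def sync(self, upload: Callable[[dict], None]) -> int:
        # Connectivity is an enhancement: try to upload, keep the queue if the link is down.
        rows = self.db.execute(
            "SELECT id, payload FROM outbox WHERE synced = 0 ORDER BY id"
        ).fetchall()
        sent = 0
        for row_id, payload in rows:
            try:
                upload(json.loads(payload))
            except OSError:  # ConnectionError and TimeoutError are subclasses
                break
            self.db.execute("UPDATE outbox SET synced = 1 WHERE id = ?", (row_id,))
            self.db.commit()
            sent += 1
        return sent


def local_decision(reading: dict) -> dict:
    # Stand-in for an on-device model; the 38.0 threshold is purely illustrative.
    return {"patient": reading["patient"], "flagged": reading["temperature_c"] >= 38.0}


def link_down(_record: dict) -> None:
    raise ConnectionError("no network")


store = OfflineFirstStore()
store.record(local_decision({"patient": "A-1", "temperature_c": 39.2}))
store.record(local_decision({"patient": "A-2", "temperature_c": 36.8}))

print(store.sync(link_down), store.pending())        # nothing sent, both still queued
uploaded: list = []
print(store.sync(uploaded.append), store.pending())  # queue drains once a link exists
```

The design choice worth noting is the order of operations: the decision and the local write always complete before any network call is attempted, so a dropped connection delays reporting but never delays the decision itself.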

The Rise of AI-Native Infrastructure

To fully realize sovereign, edge-native, and offline-first capabilities, a new kind of platform is required.

Not an extension of legacy systems.

Not a wrapper around third-party APIs.

But an engine built specifically for AI.

AI-native infrastructure is designed from the ground up with intelligence at its core. Every layer, from data processing to model execution, is optimized for local, high-performance operation.

This architecture eliminates:

- Dependency on external APIs

- Hidden latency from cloud routing

- Security gaps introduced by third-party integrations

- “AI amnesia” caused by stateless, session-based systems

What replaces it is a persistent, high-performance intelligence layer that operates consistently across environments.
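As a rough illustration of the difference between a stateless session and a persistent layer, the sketch below keeps context on local disk so that it survives a restart. `PersistentContext` and its methods are hypothetical names chosen for this example, and a JSON file stands in for whatever storage a real system would use.

```python
import json
import tempfile
from pathlib import Path


class PersistentContext:
    """Hypothetical on-device context store that outlives a single session."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Reload earlier context if it exists instead of starting empty each session.
        self.turns = json.loads(path.read_text()) if path.exists() else []

    def remember(self, role: str, text: str) -> None:
        self.turns.append({"role": role, "text": text})
        self.path.write_text(json.dumps(self.turns))

    def recall(self, last_n: int = 5) -> list:
        return self.turns[-last_n:]


with tempfile.TemporaryDirectory() as workdir:
    context_file = Path(workdir) / "context.json"

    first_session = PersistentContext(context_file)
    first_session.remember("clinician", "Patient reports a fever since Monday.")

    # A new object simulates a restart; the earlier turn is still available.
    second_session = PersistentContext(context_file)
    print(second_session.recall())
```

A stateless, session-based service would begin the second session with an empty history; here the state lives with the device and never has to leave it.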

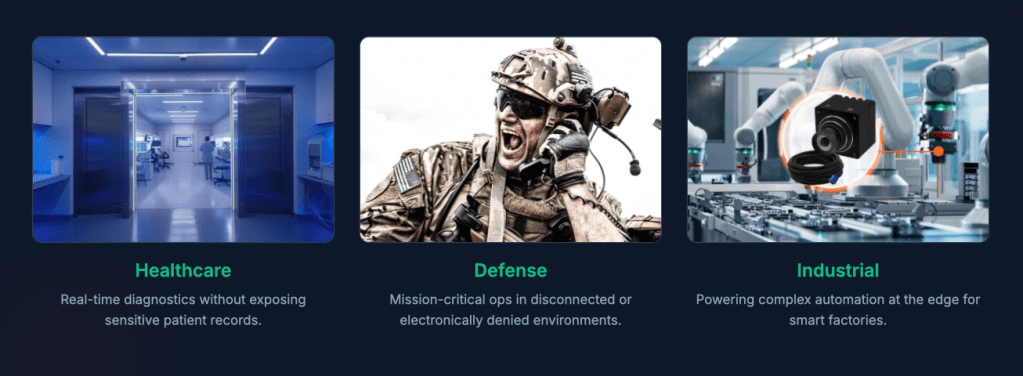

Across Industries, Beyond Constraints

One of the defining characteristics of this new paradigm is its adaptability. Because the architecture is not tied to a specific use case or vertical, it can be deployed across a wide range of industries:

- In healthcare, enabling real-time diagnostics without exposing patient data

- In defense, supporting operations in disconnected or denied environments

- In industrial systems, powering automation at the edge

- In enterprise environments, ensuring compliance and performance at scale

The common thread is not the industry – it’s the need for reliable, secure, and immediate intelligence. This is what makes AI truly universal.

Connectivity Is Optional. Intelligence Is Not.

The cloud will not disappear. It will continue to play a role in aggregation, coordination, and large-scale processing. But it will no longer be the default foundation for intelligence.

The future belongs to systems that can operate independently—systems that do not fail when connectivity does, systems that do not compromise when security matters most. In this future:

- Intelligence runs locally

- Data remains private

- Performance is immediate

- Systems are resilient by design

This is not an incremental improvement. It is a structural shift.

A New Standard for AI

As organizations rethink their approach to artificial intelligence, one question becomes central:

Where should intelligence live?

For years, the answer was “in the cloud.” Increasingly, the answer is changing. Closer. Faster. Safer. Local. The next generation of AI will not be defined by bigger models alone, but by where – and how – they operate. And the systems that embrace this shift will not just perform better. They will endure.

Key references

Rhode Island Current:

https://rhodeislandcurrent.com/2026/04/20/rhode-island-cant-wait-for-washington-to-fund-hospital-cybersecurity/

Paubox

https://www.paubox.com/blog/top-healthcare-data-breaches-of-2025-affect-over-29-million-so-far

MedCity News

https://medcitynews.com/2026/01/the-blast-radius-problem-what-the-2025-aws-outage-reveals-about-healthcares-cloud-fragility/

Censinet

https://censinet.com/perspectives/aws-outage-healthcare-wake-up-call

SecurityToday

https://securitytoday.com/articles/2026/04/28/us-healthcare-data-breach-crisis-impacts-millions.aspx
